The Grokking of Life on Earth: Evolution, Intelligence, and the Next Phase of AI

Fire was unthinkable to early life on Earth ... AI was unthinkable to the first humans ... what chance do we have of predicting what comes next?

Tracking Artificial Intelligence, Climate Change, Renewable Energy & Biotechnology - interactive data, plain-English explainers & sourced articles.

The human brain is the most energy-efficient computing system ever studied. It runs an entire conscious lifeform (perception, memory, language, emotion, movement) on between 12 and 20 watts of power, roughly the same draw as charging a smartphone. Switzerland's Blue Brain Project estimated that simulating the human brain's full processing in real time would require approximately 2.7 billion watts. This is equivalent to the combined output of three nuclear power stations - enough electricity to supply a large city.
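
To put that gap in perspective, here is a quick back-of-the-envelope sketch that uses only the figures quoted above. The variable and function names are illustrative, and the result is only as reliable as the 2.7 billion watt estimate itself.

```python
# Figures quoted in the paragraph above; names are illustrative assumptions.
BRAIN_WATTS_LOW = 12       # lower end of the brain's power draw
BRAIN_WATTS_HIGH = 20      # upper end of the brain's power draw
SIMULATION_WATTS = 2.7e9   # estimate attributed to the Blue Brain Project


def power_ratio(simulation_watts: float, brain_watts: float) -> float:
    """How many times more power the simulation needs than the brain."""
    return simulation_watts / brain_watts


ratio_at_20w = power_ratio(SIMULATION_WATTS, BRAIN_WATTS_HIGH)
ratio_at_12w = power_ratio(SIMULATION_WATTS, BRAIN_WATTS_LOW)

print(
    f"The simulation would need roughly {ratio_at_20w / 1e6:.0f} million "
    f"to {ratio_at_12w / 1e6:.0f} million times the brain's power."
)
```

On those numbers, the simulated brain would draw somewhere between 135 million and 225 million times the power of the real one.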

For most of us, the smartphone has taken over ... camera, map, wallet, calendar, alarm clock, newspaper, the 'fountain of all knowledge' in our pocket. Now it's being asked to become something even bigger ... our personal AI assistant, apparently capable of doing almost anything better than the average human.

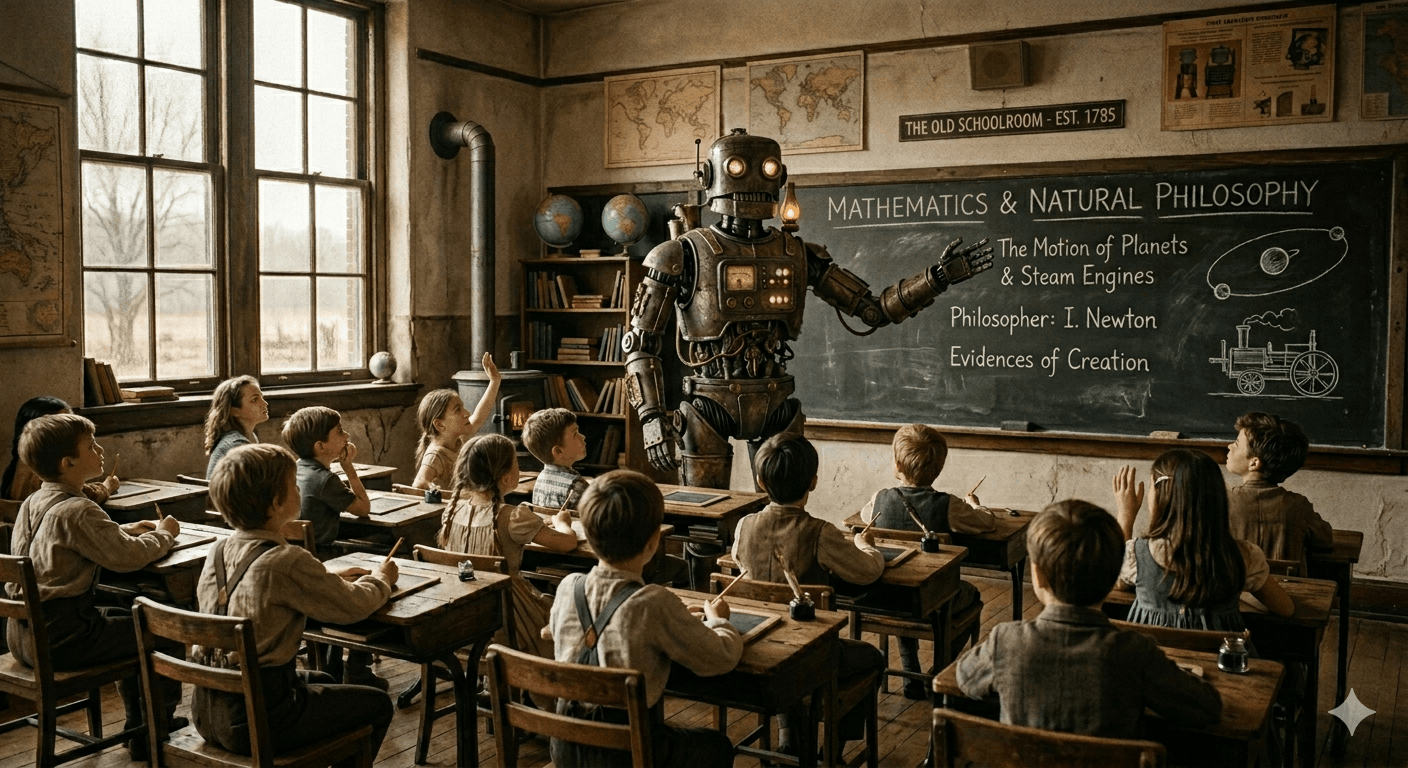

Here is the argument this article makes. AI is not simply changing education. It is creating the conditions under which significant numbers of young people will choose to route around formal education entirely. They will use AI to learn faster, more cheaply, and arguably more effectively than the traditional system allows. Forward-thinking employers will not merely tolerate this. Many will actively prefer it. And the institutions that do not adapt quickly enough will find themselves not reformed, but ignored.

A learning journey into alignment, global regulation, and the risks most people haven't heard of. From the safety engineering already baked into ChatGPT, Gemini and Claude, to killer robots, sovereign AI, and the question of who gets to decide how these systems behave.

The chatty narrator in our heads (frustrating, anxious, occasionally brilliant, and usually arriving after the decision has already been made) remains one of the things that most distinctively separates us from the most capable machines we have ever built.

If we want to build a general intelligence that can operate in the real world, whether that is driving a car, performing surgery, or building a house, it must understand embodiment. The physical world is entirely built on constraints, resource management, and cause-and-effect.

The tools most people think of as AI today (ChatGPT, Gemini, Claude) are language models. Extraordinarily capable ones. But there is a ceiling. The next phase of AI is not about predicting the next word. It is about understanding the world the word is trying to describe.

We’ve been waiting for a single, god-like "Superintelligence" to emerge. But new research from Google’s Paradigms of Intelligence team suggests we were looking for the wrong thing. It turns out AI isn't becoming a single meta-mind. It’s becoming a city.

In 1950, a mathematician working in Manchester typed a question that has refused to go away: Can machines think? He was Alan Turing, the man who had already helped crack the Enigma code, helped shorten a world war, and laid the theoretical foundations for the modern computer. By any measure, he had earned the right to ask big questions. And this was about as big as they came.

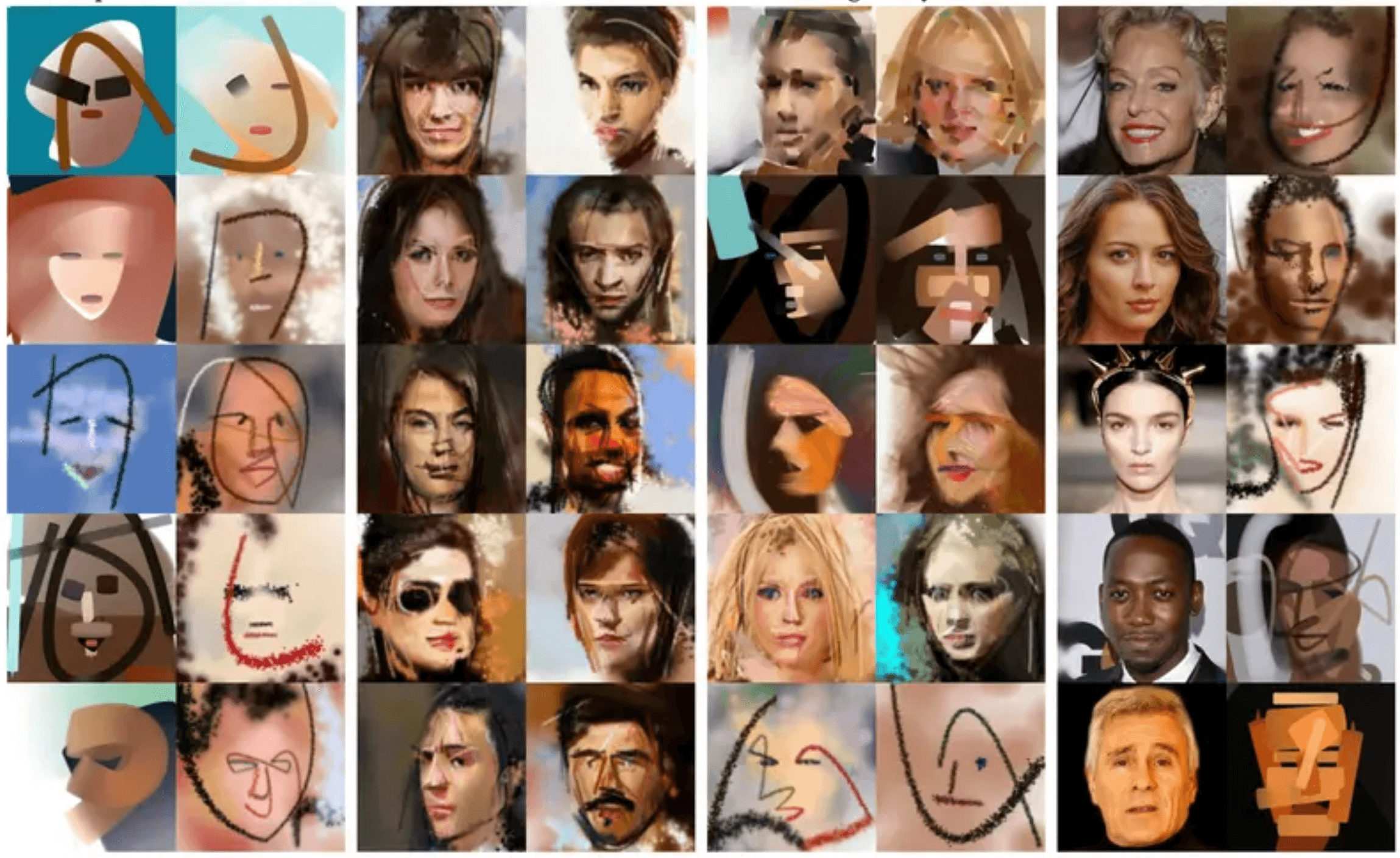

AI language models have no senses. No body. No real-time input from a living world. They learn from text, every book, article, tweet, and forum post we have collectively produced, and from that they infer, blend, and extrapolate. They are, in a sense, a distillation of what humans have already written about being human. Which means they are, at best, one step removed from the experience itself.

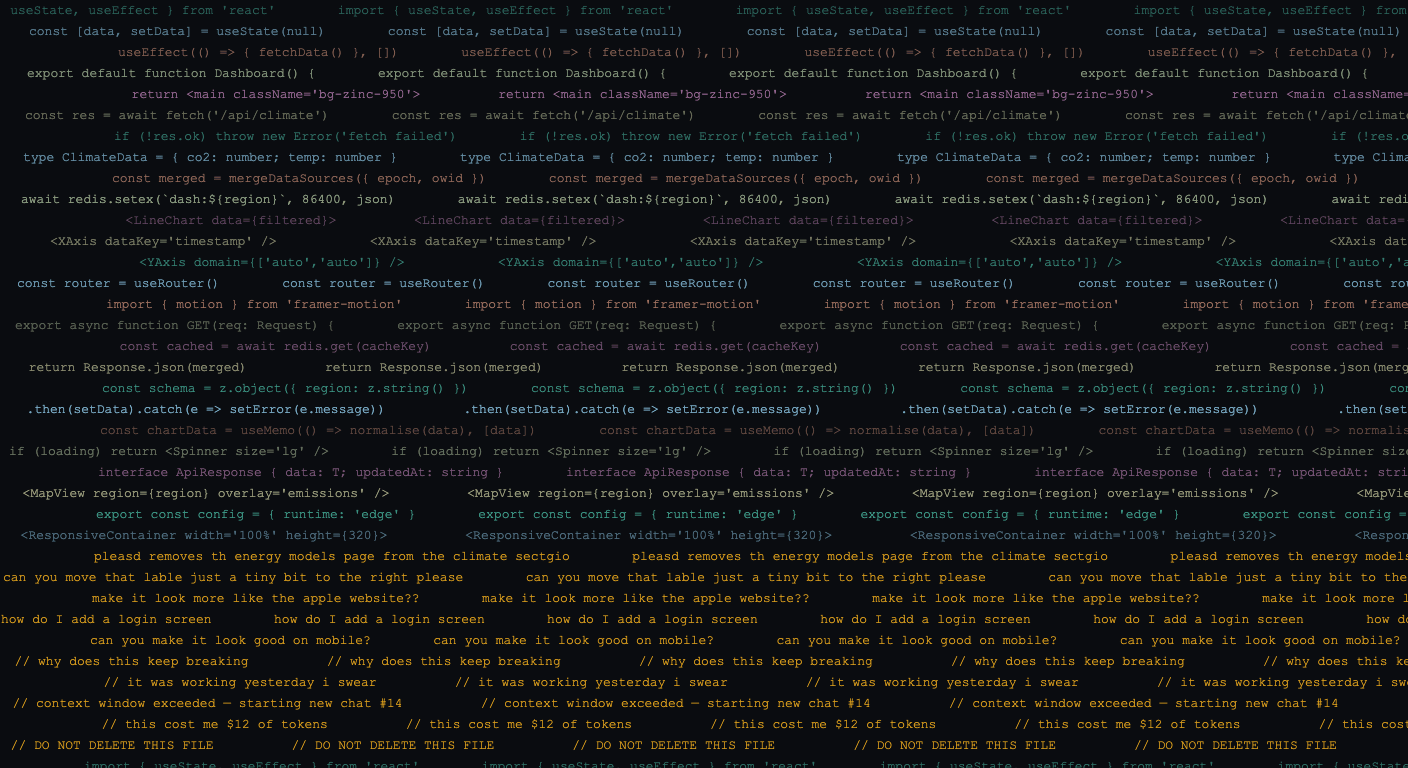

Vibecoding is not magic. It will not make you a developer overnight, and you will spend meaningful portions of your time sitting anxiously in front of a progress bar, wondering whether the AI is about to delete something important. But it is genuinely, surprisingly powerful. It is fast. It costs less than a decent night out. And it produces things you could not have produced alone.

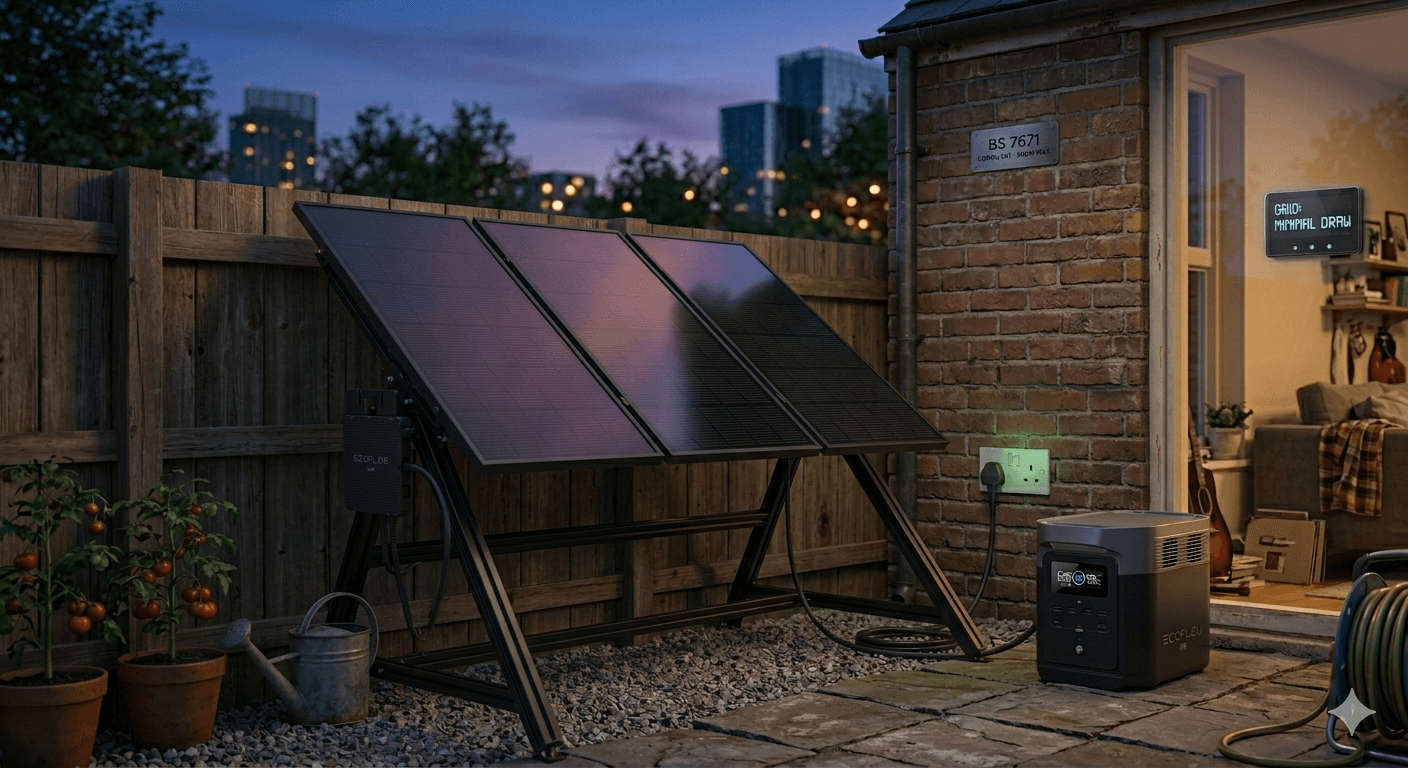

Our planet has been circling the sun for roughly 4.5 billion years, and for almost all of our own short time on it, humanity has been a passive observer of solar energy. We’ve watched the seasons shift, but only recently have we learned to "plug in" to that cosmic engine.

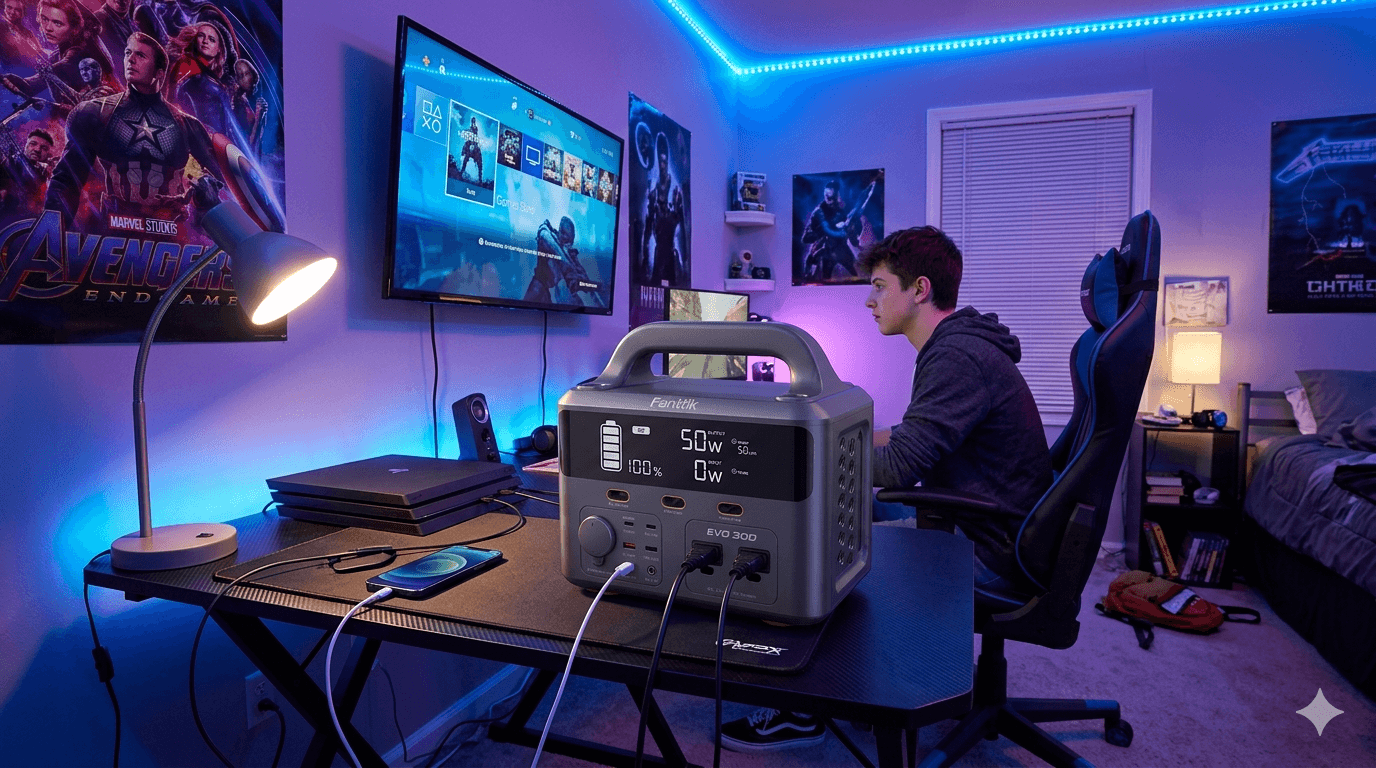

Millions of teenagers are genuinely worried about climate change. Some are doing the big things - protesting, going vegan, badgering their parents to switch energy supplier. But one quietly practical idea is gaining traction: a plug-in portable battery bank, charged overnight on cheap electricity, used to power your bedroom setup during the evening peak. Does it actually reduce carbon emissions? And does it save any money? We ran the numbers for both the UK and the USA.

The UK is building one of the world's most ambitious battery storage networks, yet its grid operator has just awarded zero contracts to battery projects in a critical stability auction - despite spending £323 million proving the technology works. It is the latest chapter in a story of institutional systems failing to keep pace with the clean energy transition.

If we accept that the inner voice is just a byproduct of idle processing, we suddenly have a way to detect consciousness in entities that cannot talk back. Instead of asking a subject "Are you aware?", we measure ...

Spoiler Alert: Occludons mediate the “Force of Isothermal Occlusion,” and the universe is actually just a vast thermodynamic filter. Okay, that’s not proven yet. In fact, I have absolutely no idea if it’s a valid scientific idea. But it is novel, and it wasn’t ripped from the pages of a sci-fi novel. It came from a chat with an AI.